
There is a pattern that repeats itself so reliably that it has become almost predictable. A founder generates a marketplace MVP with AI tools – Cursor, Bolt, or ChatGPT – and within weeks has something that demos beautifully. Investors nod. Early users say it looks promising.

Then reality hits.

The first hundred users expose edge cases nobody planned for. Data bleeds between accounts. Search times out. There’s no admin panel for disputes. And when the team tries adding a feature, they find a tangled codebase with no clear architecture.

Only about 5% of generative AI pilot programs achieve rapid revenue growth. The remaining 95% stall with no measurable P&L impact.

That stall is the default trajectory for an AI Minimum Viable Product built without deliberate engineering judgment. And in the marketplace category specifically, the consequences of structural weakness arrive faster and hit harder than in almost any other type of product.

That’s the real reason why AI marketplace MVPs fail so frequently. Many founders launch an AI MVP and fail within months, unable to reconcile the impressive demo with the collapsing production system.

The biggest mistakes that make an AI Minimum Viable Product break early

Weak architecture from the start

Most AI coding assistants optimise for the immediate prompt, not the long-term system. The result is a codebase that works perfectly in a single-user development environment but crumbles when multiple buyers, sellers, and admins interact simultaneously. You get duplicated functions, poorly abstracted components, and non-standard architecture that no experienced developer would knowingly ship.

In marketplace terms, this means your platform might handle one transaction smoothly but fail entirely when ten happen at once. Database queries that ran in 200ms at launch stretch to 5+ seconds under load because the AI didn’t optimise for the join-heavy queries that marketplaces demand. This is the classic AI MVP failure – not because the product idea was wrong, but because the foundation was never built to support it.

There is a less visible but more damaging risk: data exposure. Developers routinely paste real API keys, database schemas, and environment files into AI assistants to get quicker results.

That data doesn’t stay private – it passes through third-party servers, gets stored in prompt logs, and can end up in model-training pipelines you have no control over.

For a marketplace processing real payments, one leaked credential can mean regulatory fines and lawsuits that no hotfix will prevent.

The generated code itself creates a second problem: AI routinely skips or misconfigures access controls, leaving admin panels and payout endpoints exposed without any obvious warning sign.
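
One low-effort habit that reduces the exposure described above is scrubbing secrets before anything is pasted into an assistant. The snippet below is a minimal sketch of that idea, not a complete secret scanner: the `redact_env` helper and the list of secret-looking key names are our own illustrative assumptions, and a real team would pair this with a dedicated scanning tool.

```python
import re

# Key names that suggest a secret. This pattern is an illustrative assumption,
# not an exhaustive list.
SECRET_NAME = re.compile(r"(KEY|SECRET|TOKEN|PASSWORD|DATABASE_URL)", re.IGNORECASE)


def redact_env(text: str) -> str:
    """Replace the values of secret-looking KEY=value lines with a placeholder."""
    redacted = []
    for line in text.splitlines():
        name, sep, _value = line.partition("=")
        is_comment = line.lstrip().startswith("#")
        if sep and not is_comment and SECRET_NAME.search(name):
            redacted.append(f"{name}=<REDACTED>")
        else:
            redacted.append(line)
    return "\n".join(redacted)


# Hypothetical .env content with made-up values.
sample = "PAYMENT_SECRET_KEY=not-a-real-key\nPORT=3000\n# comment\nDB_PASSWORD=hunter2"
print(redact_env(sample))
```

The helper deliberately errs on the side of over-redacting: any key whose name merely contains one of the listed words is masked.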

Oversimplified marketplace workflows

Marketplaces are deceptively complex. A typical transaction involves inventory reservation, payment holds, commission splits, refund logic, and multi-party notifications. AI-generated MVPs tend to hardcode happy paths. They skip edge cases entirely.

You might discover, months into operation, that your platform processes a refund but doesn’t restore the seller’s inventory count. Or that a buyer can “purchase” the same unique item twice because the AI never implemented optimistic locking. These oversights aren’t immediately visible but surface in production as another AI MVP failure.
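
To make the double-purchase case concrete, here is a minimal sketch of optimistic locking using Python’s built-in `sqlite3` module. The `listings` table, its `version` column, and the `try_purchase` helper are hypothetical names chosen for illustration; a production marketplace would apply the same conditional-update idea on its own database and schema.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE listings ("
    "id INTEGER PRIMARY KEY, status TEXT, buyer_id INTEGER, version INTEGER)"
)
conn.execute("INSERT INTO listings VALUES (1, 'available', NULL, 0)")
conn.commit()


def read_version(listing_id: int) -> int:
    """Return the version the buyer saw when loading the listing."""
    row = conn.execute(
        "SELECT version FROM listings WHERE id = ?", (listing_id,)
    ).fetchone()
    return row[0]


def try_purchase(listing_id: int, buyer_id: int, seen_version: int) -> bool:
    """Mark the listing sold only if nobody changed it since it was read."""
    cur = conn.execute(
        "UPDATE listings SET status = 'sold', buyer_id = ?, version = version + 1 "
        "WHERE id = ? AND status = 'available' AND version = ?",
        (buyer_id, listing_id, seen_version),
    )
    conn.commit()
    # rowcount is 0 when the WHERE clause no longer matches: someone got there first.
    return cur.rowcount == 1


# Two buyers open the same listing and see the same version.
seen_by_alice = read_version(1)
seen_by_bob = read_version(1)

alice_won = try_purchase(1, buyer_id=101, seen_version=seen_by_alice)
bob_won = try_purchase(1, buyer_id=102, seen_version=seen_by_bob)
print(alice_won, bob_won)
```

The second buyer’s update matches zero rows, so the application can show “this item has just been sold” instead of silently recording a second sale.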

Messy user roles and permissions

Marketplaces have at least three distinct user types – buyers, sellers, and administrators – each requiring granular, context-specific permissions. AI-generated code rarely gets this right out of the box. Permission logic frequently fails silently, letting unauthorised users access restricted data or administrative functions.
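
A common way to avoid silent permission failures is to deny by default and list every allowed action explicitly. The sketch below assumes a simplified model with three roles and three actions; the names `Role`, `ALLOWED`, and `can` are illustrative, and real platforms usually need more context (order state, account status) than this example checks.

```python
from dataclasses import dataclass
from enum import Enum


class Role(Enum):
    BUYER = "buyer"
    SELLER = "seller"
    ADMIN = "admin"


@dataclass(frozen=True)
class User:
    id: int
    role: Role


@dataclass(frozen=True)
class Listing:
    id: int
    seller_id: int


# Explicit allow-list: any (role, action) pair not listed here is denied.
ALLOWED = {
    (Role.BUYER, "view_listing"),
    (Role.SELLER, "view_listing"),
    (Role.SELLER, "edit_listing"),
    (Role.ADMIN, "view_listing"),
    (Role.ADMIN, "edit_listing"),
    (Role.ADMIN, "issue_refund"),
}


def can(user: User, action: str, listing: Listing) -> bool:
    """Deny by default; sellers may only edit listings they own."""
    if (user.role, action) not in ALLOWED:
        return False
    if user.role is Role.SELLER and action == "edit_listing":
        return listing.seller_id == user.id
    return True


listing = Listing(id=1, seller_id=7)
print(can(User(7, Role.SELLER), "edit_listing", listing))  # the owner
print(can(User(8, Role.SELLER), "edit_listing", listing))  # a different seller
print(can(User(9, Role.BUYER), "issue_refund", listing))   # pair not in the allow-list
```

Because unknown actions fall through to `False`, a forgotten rule blocks a legitimate user (which gets reported quickly) rather than exposing data to the wrong one.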

Underestimated search and listing logic

Marketplace search is not a simple keyword match. It requires faceted filtering, relevance ranking, geospatial queries, and often real-time availability checks. AI-generated MVPs typically implement the simplest possible search – a single SQL LIKE query – which performs acceptably with 100 listings but grinds to a halt at 10,000.
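
The difference between scanning every row and looking up a prepared index can be shown with a toy example. This is a deliberately small sketch in pure Python with made-up listings; it illustrates the idea behind an inverted index with facet filters and is not a substitute for a real search engine, which also handles ranking, typos, and synonyms.

```python
from collections import defaultdict

# Hypothetical sample data.
listings = [
    {"id": 1, "title": "Vintage road bike", "category": "cycling", "city": "Berlin"},
    {"id": 2, "title": "Road bike helmet", "category": "cycling", "city": "Paris"},
    {"id": 3, "title": "Vintage film camera", "category": "photo", "city": "Berlin"},
]

# Build the index once (token -> ids of listings containing it) instead of
# scanning every title on every query, which is what LIKE '%term%' does.
index: dict[str, set[int]] = defaultdict(set)
for item in listings:
    for token in item["title"].lower().split():
        index[token].add(item["id"])

by_id = {item["id"]: item for item in listings}


def search(query: str, **facets: str) -> list[int]:
    """Return ids of listings matching every query token and every facet value."""
    tokens = query.lower().split()
    if not tokens:
        return []
    ids = set.intersection(*(index.get(t, set()) for t in tokens))
    return sorted(
        i for i in ids
        if all(by_id[i].get(key) == value for key, value in facets.items())
    )


print(search("road bike"))
print(search("vintage", city="Berlin", category="photo"))
```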

Then there’s listing management. Sellers need to create, update, pause, duplicate, and delete listings, each with validations that depend on the listing state and the seller’s account status. AI code rarely models these state machines properly. The result is listings that get “stuck” in intermediate states, requiring manual database fixes – a brittle, unscalable operational burden.
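
Listings stop getting “stuck” when every legal state change is written down in one place. The following is a minimal sketch of such a state machine; the four states, the transition table, and the `seller_in_good_standing` flag are assumptions made for illustration, and a real marketplace will have its own states and rules.

```python
from enum import Enum


class ListingState(Enum):
    DRAFT = "draft"
    ACTIVE = "active"
    PAUSED = "paused"
    DELETED = "deleted"


# Every legal move is listed explicitly; anything else is rejected.
TRANSITIONS = {
    ListingState.DRAFT: {ListingState.ACTIVE, ListingState.DELETED},
    ListingState.ACTIVE: {ListingState.PAUSED, ListingState.DELETED},
    ListingState.PAUSED: {ListingState.ACTIVE, ListingState.DELETED},
    ListingState.DELETED: set(),
}


class InvalidTransition(Exception):
    """Raised when a listing is asked to make a move the table does not allow."""


def transition(
    current: ListingState,
    target: ListingState,
    seller_in_good_standing: bool = True,
) -> ListingState:
    """Return the new state, or raise if the move is not allowed."""
    if target not in TRANSITIONS[current]:
        raise InvalidTransition(f"{current.value} -> {target.value} is not allowed")
    # Validation that depends on the seller's account status.
    if target is ListingState.ACTIVE and not seller_in_good_standing:
        raise InvalidTransition("a suspended seller cannot publish listings")
    return target


state = transition(ListingState.DRAFT, ListingState.ACTIVE)
state = transition(state, ListingState.PAUSED)
print(state)
```

An attempt such as `transition(ListingState.DELETED, ListingState.ACTIVE)` raises `InvalidTransition` instead of leaving the record in an undefined intermediate state.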

Underbuilt admin and operations

When founders use AI to generate an MVP, they naturally focus on the buyer and seller experience. Admin capabilities – the tools your operations team needs to manage disputes, moderate content, process refunds, and analyse platform health – get neglected entirely.

Most AI-generated marketplace MVPs ship with zero admin functionality beyond what came with the framework. That means every customer support issue becomes a direct database query or a manual script. This approach doesn’t scale, because the human operational cost rises linearly with every new user.

One marketplace we audited had its founder spending 15 hours a week manually processing refunds because the platform lacked even a basic admin panel. These are severe AI MVP technical issues that drain founder time and erode user confidence long before the platform ever reaches critical mass.

Hidden technical debt

AI coding assistants produce what can only be described as invisible technical debt. In traditional development, teams make conscious trade-offs: “We’ll hardcode this for now and fix it post-launch.” With AI, the debt is baked in by an engine that has no conception of maintenance. Structural shortcuts that a human engineer would never accept accumulate silently across thousands of lines of generated code.

And the debt compounds. Every new feature prompt generates more tangled code layered on top of existing structural issues. By the time you hit real scale, your codebase cannot be incrementally improved, only replaced. The hidden cost of an AI-generated MVP is not the initial build; it’s the complete rewrite that becomes inevitable within 12–18 months.

Security and reliability gaps

AI-generated code prioritises functionality over security. Input validation is minimal. Authentication logic is copied from public tutorials. SQL injection vulnerabilities, exposed API keys, and missing rate limiting are not hypothetical risks – they’re routine findings in our code audits.

This is one of the clearest reasons AI-assisted MVPs fail in production environments. The same tools that accelerate development also accelerate the accumulation of dangerous vulnerabilities, simply because security was never part of the prompt.
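
SQL injection is the easiest of these findings to demonstrate. The sketch below uses Python’s built-in `sqlite3` module with a made-up `users` table and a made-up malicious input; it contrasts splicing user input into the SQL text with passing it as a bound parameter.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT, role TEXT)")
conn.executemany(
    "INSERT INTO users (email, role) VALUES (?, ?)",
    [("buyer@example.com", "buyer"), ("admin@example.com", "admin")],
)

# Input an attacker might type into a login or search field.
malicious = "nobody@example.com' OR '1'='1"

# Unsafe: the input becomes part of the SQL text, so its quote characters
# change the meaning of the query and the WHERE clause is always true.
unsafe_sql = f"SELECT email FROM users WHERE email = '{malicious}'"
unsafe_rows = conn.execute(unsafe_sql).fetchall()

# Safe: the input is sent as a bound parameter and is only ever compared
# as a literal string.
safe_rows = conn.execute(
    "SELECT email FROM users WHERE email = ?", (malicious,)
).fetchall()

print(len(unsafe_rows), len(safe_rows))
```

The unsafe query returns every user in the table, while the parameterised one returns nothing, because no user has that literal email address.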

Georgia Tech’s Vibe Security Radar project tracked 35 CVEs in March 2026 directly attributable to AI coding tools, a near-sixfold increase from 6 in January 2026. Researchers estimate the true count is 5–10x higher across the broader ecosystem.

For marketplaces, which inherently handle PII (personally identifiable information), payment data, and sometimes identity verification, these gaps are existential. A single data breach doesn’t just cause downtime; it destroys the trust between buyers and sellers that a marketplace depends on. These aren’t minor AI MVP problems – they are business-ending events waiting to happen.

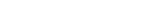

Why these AI MVP scaling mistakes stop a marketplace from growing

Growth exposes weak foundations

When a marketplace successfully attracts users, everything breaks at once: the database runs out of connections, memory leaks cause hourly crashes, and response times climb from acceptable to unacceptable. The breaking point for most AI-generated code is around a few thousand users – but for marketplaces, with their inherently chatty transaction patterns, the threshold is much lower. This is where most founders discover that their ambitions to scale an AI MVP were built on sand.

The problem of scaling a generative AI build beyond the MVP stage is fundamentally architectural. Scaling isn’t about adding more servers; it’s about whether the code can handle concurrent operations, whether the data model supports growth, and whether the system degrades gracefully under stress. AI handles none of these concerns by default. An AI MVP fails at scale not because hardware is insufficient, but because the code was never designed for concurrency.

Fragile MVPs slow down product decisions

Feature development on an AI-generated codebase is a nightmare. Because business logic is scattered across dozens of components, even a simple change – like adding email notifications for new orders – can require touching 40+ files and introduce bugs in seemingly unrelated functionality. What should be a day of work becomes three weeks of debugging. This is the painful reality of fragile MVPs: they look complete on the surface but shatter the moment you try to extend them.

This development paralysis means your marketplace cannot respond to user feedback or competitive pressure. The speed advantage of the AI-generated MVP vanishes precisely when speed matters most: after you’ve validated the concept and need to iterate rapidly toward product-market fit. Founders who launch an AI MVP and fail to account for this reality find themselves trapped – unable to ship improvements, watching competitors capture the market they identified first.

Bad implementation distorts validation

The fundamental purpose of an MVP is to test a hypothesis. But when the AI-generated implementation contains bugs, missing workflows, and permission errors, it becomes impossible to distinguish between “users don’t want this feature” and “the feature doesn’t work correctly.”

You might abandon a promising marketplace vertical because AI built a broken version of it – a catastrophic false negative. This is perhaps the most insidious AI MVP failure: not that the product breaks, but that it causes you to make the wrong strategic decision, killing a viable business based on bad data.

Ultimately, a strategy for scaling an AI MVP must be engineered, not generated. Without that, the platform never grows beyond the MVP stage; it simply collapses.

Where AI helps – and where founders should be careful

What AI is good for

- Rapid prototyping and visual exploration: AI tools like Vercel v0 or Bolt are exceptional for generating UI components and layouts, letting you test the look and feel of your marketplace before committing to real code.

- Boilerplate generation: Authentication scaffolding, basic CRUD endpoints, and simple middleware are commoditised enough that AI can handle them reliably with human review.

- Code explanation and refactoring suggestions: When used as an assistant – not an architect – AI can help developers understand complex code paths and suggest optimisation approaches. The key is that a human makes the final decision.

- Test data and seed scripts: Generating realistic sample users, listings, transactions, and edge case scenarios is an area where AI excels and where mistakes have no production consequences.

What should not be improvised

- Database schema design: Marketplace data models (users, listings, orders, transactions, reviews, messages, disputes) have complex interdependencies that AI cannot reason about holistically. Poor schema decisions create constraints that cascade through every feature forever.

- Payment and transaction logic: The path from “buyer clicks pay” to “seller receives funds” involves idempotency, atomicity, partial failure handling, and regulatory compliance. AI does not understand financial integrity.

- Role-based access control: Permissions in multi-sided platforms must be designed with a thorough understanding of what each actor can and cannot do under every system state. AI-generated permissions are permissive, incomplete, and perilous.

- Search and discovery: Relevance ranking, faceted filtering, synonym handling, and performance at scale require specialised engineering that goes far beyond what an LLM can produce.

- Infrastructure and deployment architecture: CI/CD pipelines, environment management, monitoring, alerting, and horizontal scaling strategies require platform engineering expertise that no prompt can replace.
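
Of the items above, idempotency in the payment path is the easiest to illustrate in a few lines. The sketch below is an in-memory stand-in, not the API of any real payment provider: `PaymentService`, its `charge` method, and the key format are hypothetical. The point is only that a retried request with the same idempotency key must return the stored result rather than charge the buyer again.

```python
from dataclasses import dataclass, field


@dataclass
class PaymentService:
    """In-memory stand-in for a payment backend (illustrative only)."""

    results: dict = field(default_factory=dict)  # idempotency key -> stored result
    charges: list = field(default_factory=list)  # every charge actually made

    def charge(self, idempotency_key: str, order_id: int, amount_cents: int) -> dict:
        # A retry with a known key returns the stored result instead of charging again.
        if idempotency_key in self.results:
            return self.results[idempotency_key]
        result = {"order_id": order_id, "amount_cents": amount_cents, "status": "captured"}
        self.charges.append(result)
        self.results[idempotency_key] = result
        return result


service = PaymentService()
first = service.charge("order-42-payment", order_id=42, amount_cents=5000)
# The client timed out and retries the same request with the same key.
retry = service.charge("order-42-payment", order_id=42, amount_cents=5000)
print(len(service.charges), first == retry)
```

In a real system the key lookup and the write would need to be atomic, for example through a unique constraint on the key column, so that two simultaneous retries cannot both pass the check.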

A successful AI MVP is the result of deliberate choices, not generative chance. The code must be reviewed, refactored, and hardened by engineers who understand what a marketplace demands at scale.

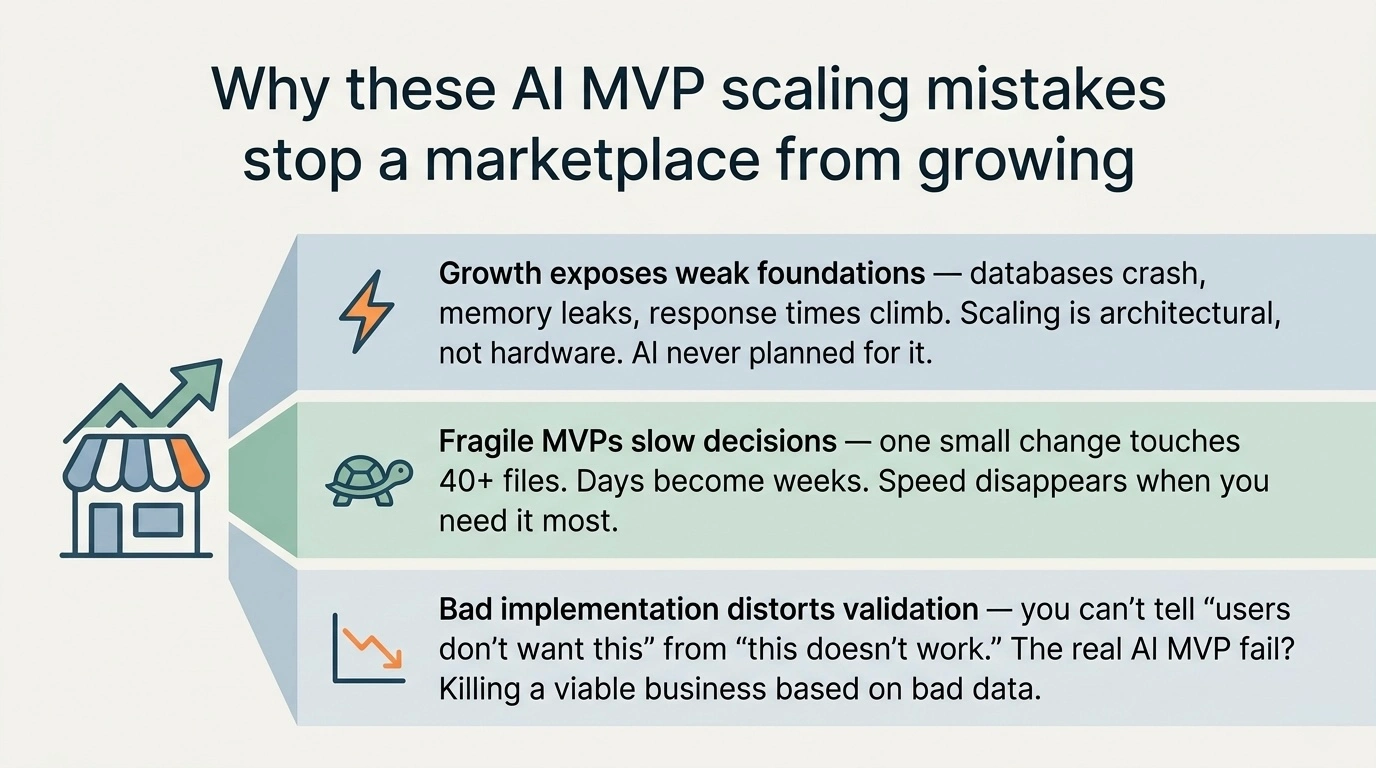

How Roobykon uses AI to prevent early MVP failure and achieve real AI MVP scale

Roobykon Software doesn’t reject AI-generated MVPs. We rebuild them on a foundation that can grow from 100 to 100,000 users without a rewrite – exactly what scaling an AI MVP demands. Here’s how we do it:

- AI Code Audit & Architecture Assessment: Our senior engineers conduct a comprehensive review of your AI-generated codebase, identifying the structural patterns that cause early failure: missing indexing strategies, leaked resource connections, scattered business logic, and security vulnerabilities. We produce a detailed technical report with prioritised remediation steps.

- Strategic Refactoring Roadmap: We map every critical path in your marketplace – listing creation, order placement, payment processing, dispute resolution – and identify exactly what must be restructured. Our refactoring plans preserve the working parts of your AI-generated MVP while systematically replacing components that can’t meet your growth requirements.

- Scalable Architecture Design: Our architects design a layered architecture with clear separation between presentation, business logic, and data access layers. We implement robust database optimisation – including proper indexing, connection pooling, and query optimisation – so performance degrades gracefully rather than collapsing at scale. This directly prevents the AI-generated MVP failure everyone dreads.

- Security and Compliance Hardening: We audit your entire platform against OWASP Top 10 standards and implement the protections AI overlooks: parameterised queries, proper authentication middleware, rate limiting, and secure credential management. For regulated marketplaces, we ensure GDPR, PCI-DSS, or SOC 2 compliance readiness.

- Operational Readiness: A marketplace needs admin tools, monitoring dashboards, and automated alerts. We build the operational layer that AI never provides, giving your team the visibility and controls needed to manage growth without drowning in manual work.

Great Marketplaces Deserve Better Than an AI MVP Fail

A marketplace is an ecosystem of trust between buyers and sellers. A rushed, generative build betrays that trust the moment a payment glitches or a role permission breaks. When the AI MVP fails, it damages not just your database, but your reputation.

We believe that robust architecture is a competitive advantage. By diagnosing the AI MVP scaling mistakes baked into auto-generated code, Roobykon Software restores the integrity of your platform. We turn the end of the MVP stage into the start of the next chapter, hardening your infrastructure so that when you’re ready to test your hypotheses, the data is clean and the user experience is flawless.

Want to know more?

Roobykon Software builds and scales marketplace platforms for founders who are serious about growth. If you have launched an AI MVP and want to understand what it will take to scale it, we would be glad to talk.
